Welcome to Tianao Zhang’s (张天骜) personal website!

I am currently a junior undergraduate student at Shanghai Jiao Tong University (SJTU), majoring in Engineering Mechanics.

I am fortunate to be advised by Prof. Yulun Zhang and Prof. Xiaokang Yang from the School of Computer Science, Shanghai Jiao Tong University (SJTU).

My research focuses on model compression and acceleration for Large Language Models (LLMs) and Diffusion Large Language Models (dLLMs), with an emphasis on techniques such as binarization and post-training quantization. I am dedicated to building powerful yet resource-efficient AI systems that push the boundaries of computational efficiency. ⚡

I am always open to collaborations and academic discussions! Feel free to reach out by email at ztaztazta2785@gmail.com or on WeChat (ID: ZTAZTAZTAZTAZTA).

🔥 News

- 2026.01: 🎉🎉 Our papers Quant-dLLM and PT2-LLM have been accepted to ICLR 2026!

- 2025.11: 🎉🎉 Our team was awarded the Grand Prize at the National “Challenge Cup” Competition (挑战杯全国特等奖) for the project “Binarization Compression Technology and Application of Large Models for Edge Deployment”!

- 2025.06: 🎉🎉 Our team was awarded the Grand Prize at the Shanghai “Challenge Cup” Competition (挑战杯上海市特等奖) for the project “Novel Binarization Compression Technology for Large Language Models”!

- 2025.01: 🎉🎉 Our paper ARB-LLM has been accepted to ICLR 2025!

📝 Publications

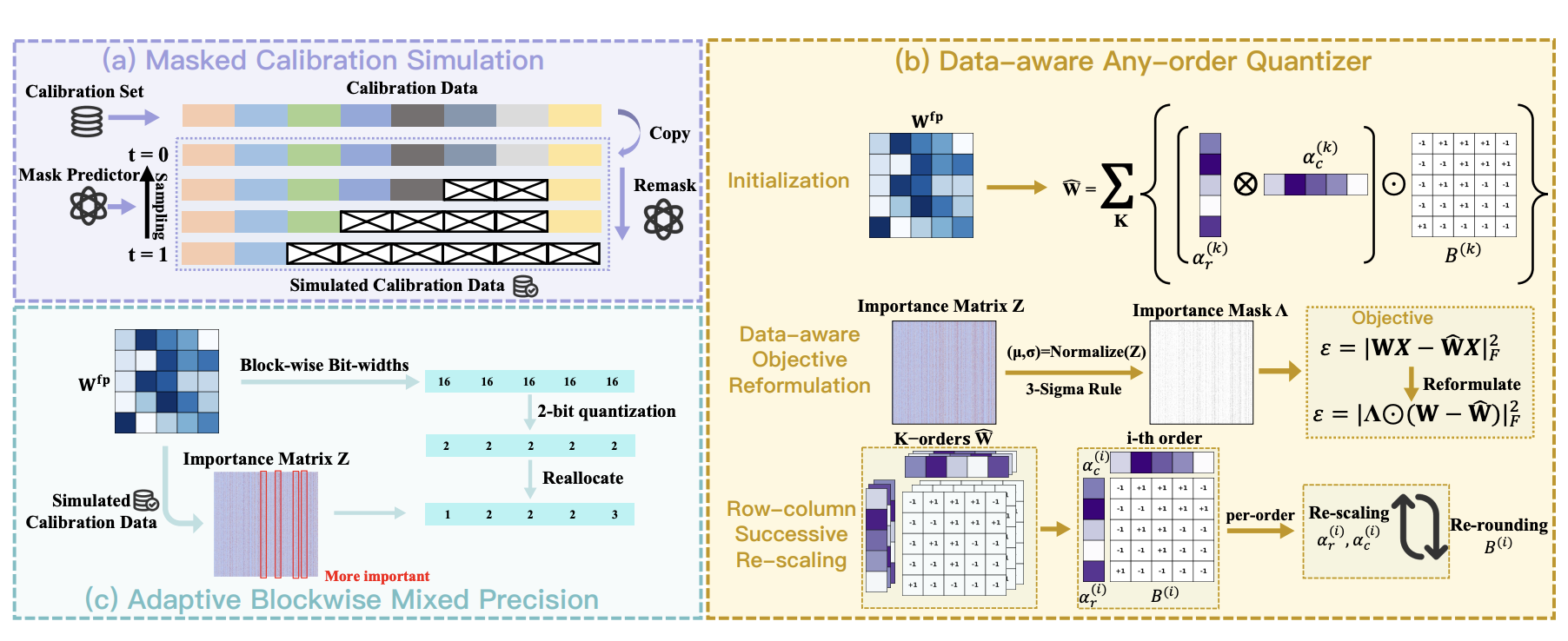

Quant-dLLM: Post-Training Extreme Low-Bit Quantization for Diffusion Large Language Models

Tianao Zhang¹, Zhiteng Li¹, Xianglong Yan, Haotong Qin, Yong Guo, and Yulun Zhang*

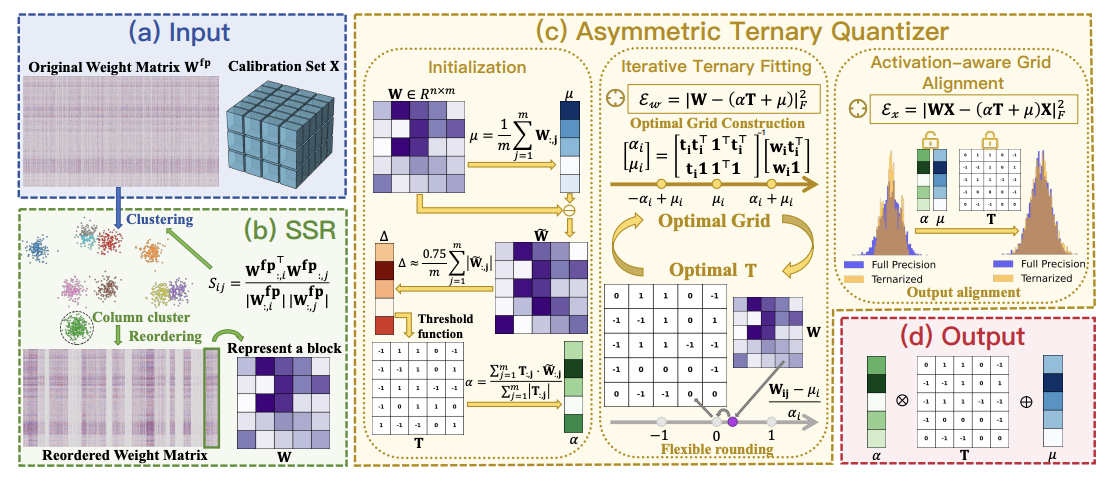

PT2-LLM: Post-Training Ternarization for Large Language Models

Xianglong Yan¹, Chengzhu Bao¹, Zhiteng Li, Tianao Zhang, Kaicheng Yang, Haotong Qin, Ruobing Xie, Xingwu Sun, and Yulun Zhang*

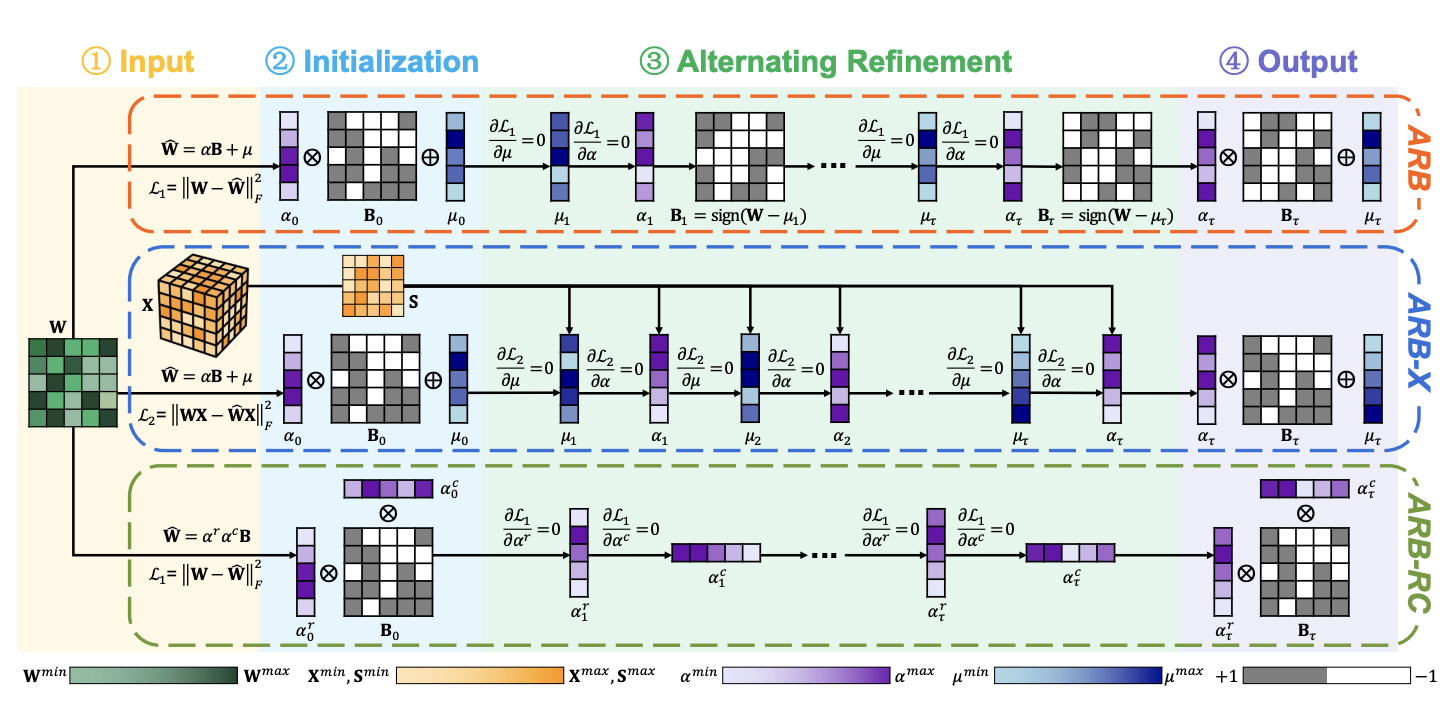

ARB-LLM: Alternating Refined Binarizations for Large Language Models

Zhiteng Li¹, Xianglong Yan¹, Tianao Zhang, Haotong Qin, Dong Xie, Jiang Tian, Zhongchao Shi, Linghe Kong*, Yulun Zhang*, and Xiaokang Yang

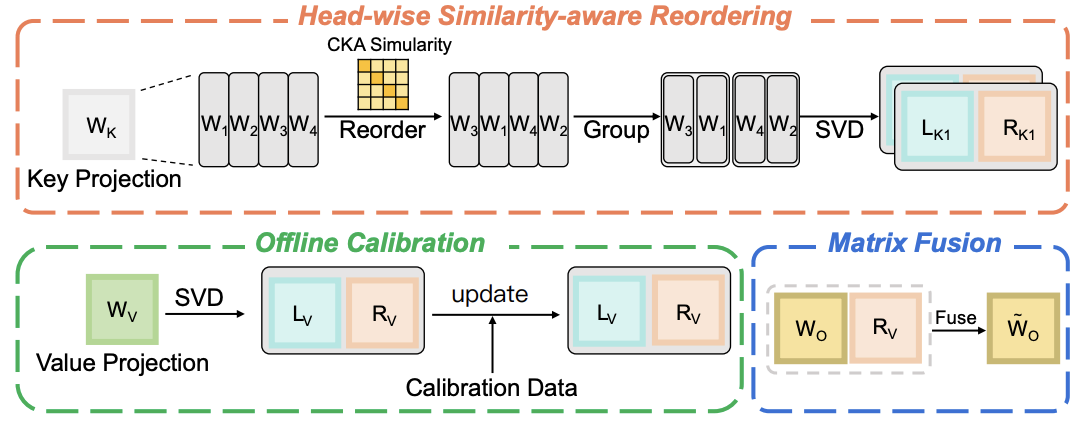

ReCalKV: Low-Rank KV Cache Compression via Head Reordering and Offline Calibration

Xianglong Yan¹, Zhiteng Li¹, Tianao Zhang, Haotong Qin, Linghe Kong, Yulun Zhang*, and Xiaokang Yang

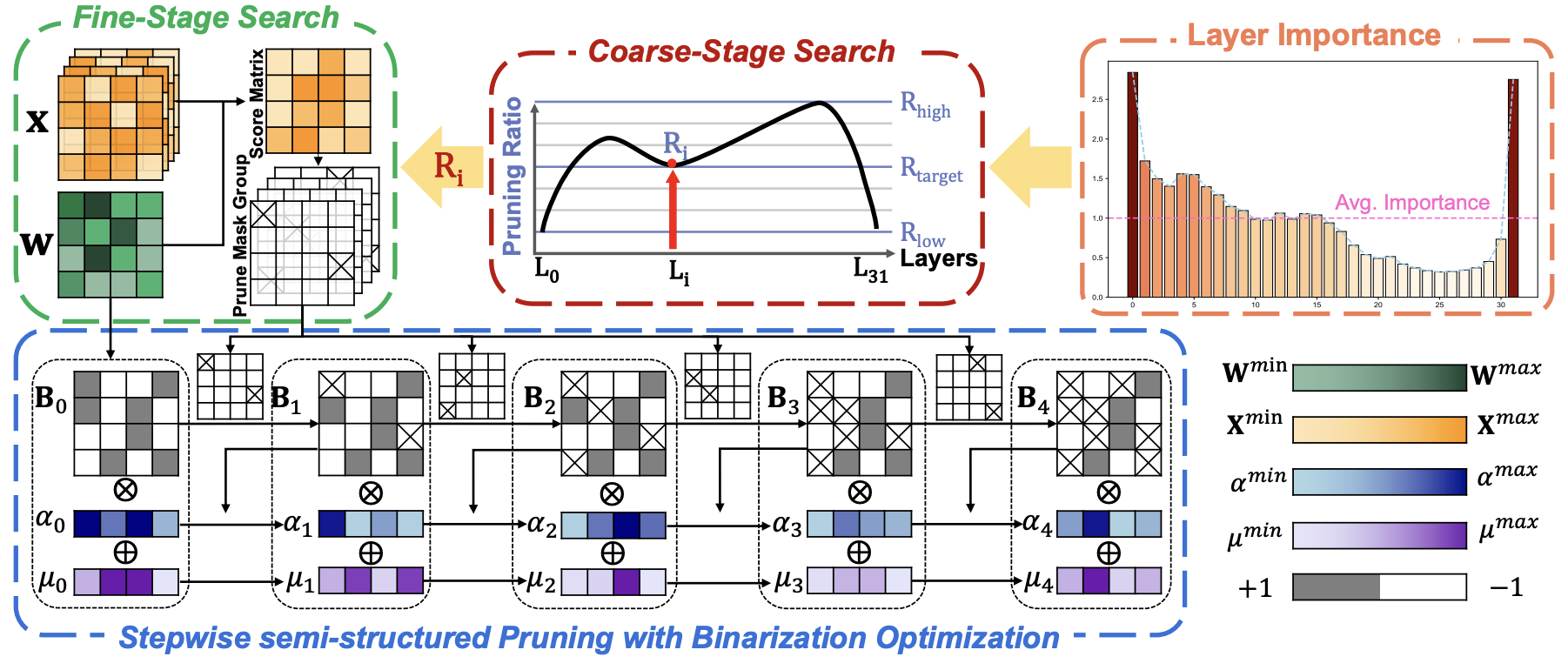

Progressive Binarization with Semi-Structured Pruning for LLMs

Xianglong Yan¹, Tianao Zhang¹, Zhiteng Li, Haotong Qin, and Yulun Zhang*

🎖 Honors and Awards

- Tongsheng Scholarship (Top 1%) 同声奖学金 (2025)

- Han Ying Ju Hua Special Achievements Scholarship (Top 1%) 含英咀华专项成就奖学金 (2025)

- Zhiyuan Honors Scholarship (Top 5%) 致远荣誉奖学金 (2023, 2024, 2025)

- Excellent Undergraduate Scholarship (Top 10%) 本科生优秀奖学金 (2024, 2025)

📖 Education

- 2023.09 - present, B.Eng. in Engineering Mechanics, Shanghai Jiao Tong University, Shanghai, China.

- 2020.09 - 2023.06, High School, The Experimental High School Attached to Beijing Normal University, Beijing, China.

🤝 Academic Service

- Reviewer for ICLR 2026.

💻 Internships

- 2025.07 - 2025.12, AI Algorithm Engineer, Huawei, Shanghai, China.